The three(ish) levels of QEMU VM graphics

I enjoy tinkering with VMs and consider QEMU to be one of the sharpest tools in my shed. In recent years, great progress in QEMU itself and in Linux device drivers has made VMs more powerful and more convenient than ever. Modern Linux distributions are ready to take advantage of these improvements out of the box. However, it’s not always easy to figure out what the options are and what they offer. Here are some of my notes on graphics support in QEMU guests.

Test environment

I’m running qemu-system-x86_64 4.2.0 on the host and Ubuntu 20.04 LTS Beta as guest. I will be using QEMU command line options as I find that easier to understand than libvirt XML files.

Since I’m focusing on graphics support here, I’ll keep unrelated options to a minimum. The instructions assume that the OS has been installed to ubu.qcow2.

Level 0: -vga std

qemu-system-x86_64 \
    -enable-kvm \
    -smp 4 \
    -m 8G \
    ubu.qcow2

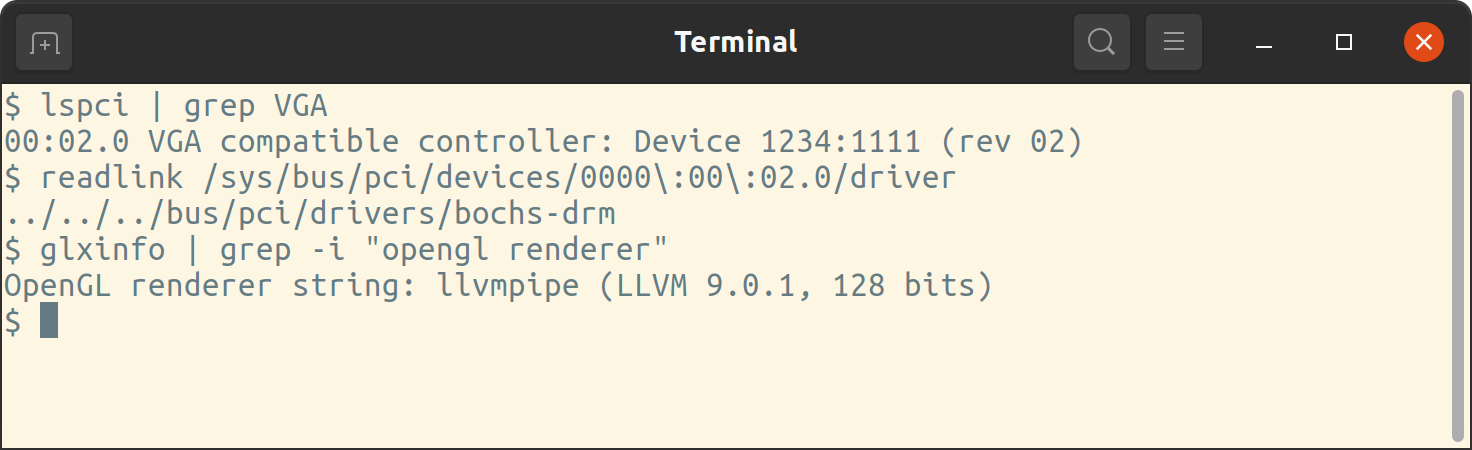

Not much to say here. If no display options are provided, QEMU defaults to -display gtk and -vga std. The std device is handled in the guest by the bochs-drm driver. It supports resolutions up to 1920x1080 and is pretty slow.
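To confirm which kernel driver actually ended up handling the virtual display, you can look at sysfs from inside the guest. Here is a minimal sketch. The function name drm_drivers is my own, and the optional sysfs root argument exists only so the function can be tried against a fake directory tree.

```shell
#!/bin/sh
# Print the kernel driver bound to each DRM card.
# $1: sysfs root (optional, defaults to /sys)
drm_drivers() {
    sysfs_root="${1:-/sys}"
    for card in "$sysfs_root"/class/drm/card*; do
        name=$(basename "$card")
        # Skip connector entries such as card0-Virtual-1
        case "$name" in
            *-*) continue ;;
        esac
        # Skip cards without a bound driver (and an unmatched glob)
        [ -e "$card/device/driver" ] || continue
        driver=$(basename "$(readlink -f "$card/device/driver")")
        echo "$name: $driver"
    done
}
```

Calling drm_drivers with no arguments in the guest should print one line per card. With -vga std I would expect it to name the bochs-drm driver mentioned above.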

Level 1: -vga virtio

qemu-system-x86_64 \
    -enable-kvm \
    -smp 4 \
    -m 8G \
    -vga virtio \
    ubu.qcow2

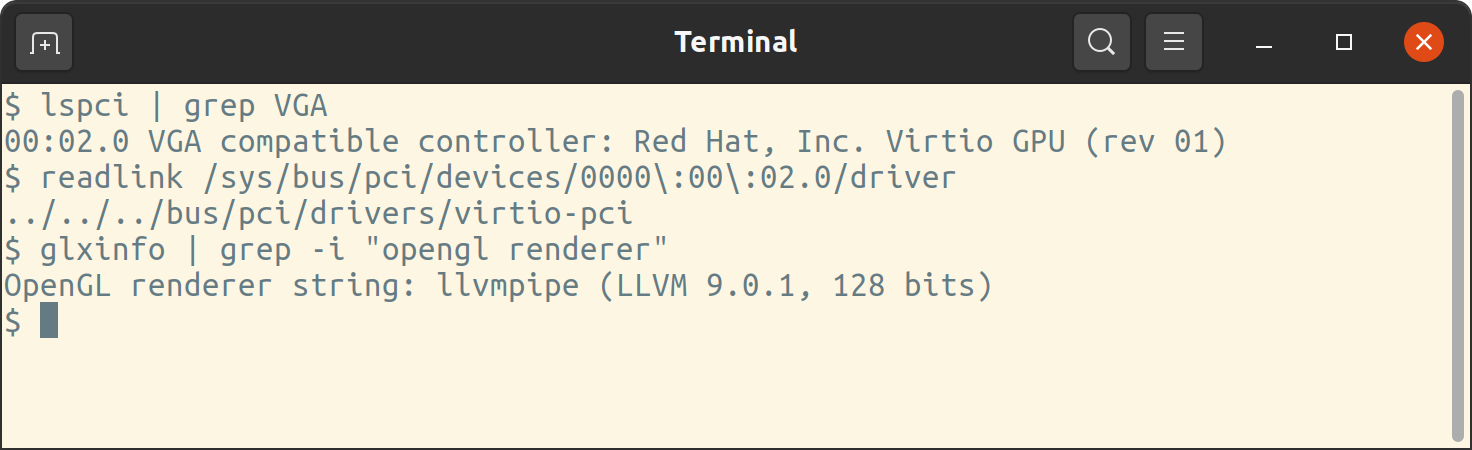

This is where things start to get interesting. The para-virtualized virtio-gpu driver scales up to 4096x2160 and can adjust resolution to match the QEMU window. This allows arbitrary resolutions like 1337x555 and makes the guest OS feel like a GUI application. However, graphics-heavy DEs are only slightly faster than with std, since any OpenGL operations are still handled by the llvmpipe software driver.

Level 2: gl=on

qemu-system-x86_64 \
    -enable-kvm \
    -smp 4 \
    -m 8G \
    -vga virtio \
    -display gtk,gl=on \
    ubu.qcow2

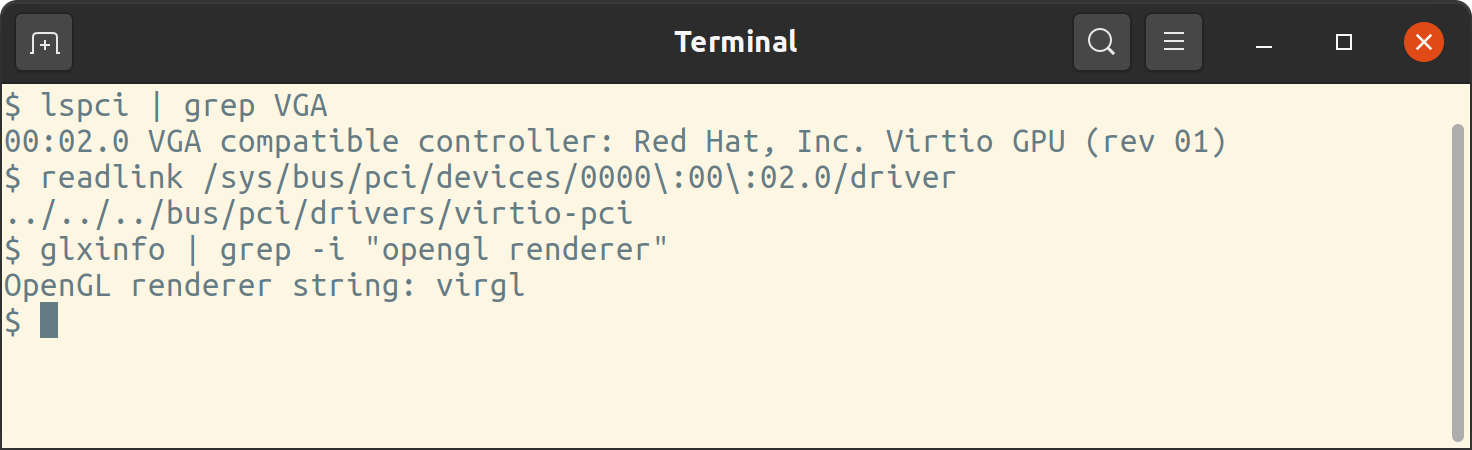

Combining -vga virtio with gl=on gives the most interesting results so far. We get all the benefits of virtio-gpu, now with accelerated OpenGL provided by the virgl Mesa driver. This is more para-virtualization in action, as virgl effectively uses the host GPU to accelerate 3D.
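A quick way to tell whether the guest is really on virgl or has fallen back to llvmpipe is to look at the OpenGL renderer string. The sketch below assumes glxinfo is installed in the guest (it ships in mesa-utils on Ubuntu). The helper name renderer_kind is my own, and the match is a plain case-sensitive substring check.

```shell
#!/bin/sh
# Classify an "OpenGL renderer string" line as printed by glxinfo.
# $1: the renderer line (or any text containing the renderer name)
renderer_kind() {
    case "$1" in
        *llvmpipe*) echo "software" ;;
        *virgl*)    echo "virgl" ;;
        *)          echo "other" ;;
    esac
}

# Inside the guest:
#   renderer_kind "$(glxinfo -B | grep 'OpenGL renderer')"
```

If this reports software even with gl=on, the guest is still rendering on the CPU and the host side is worth checking first.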

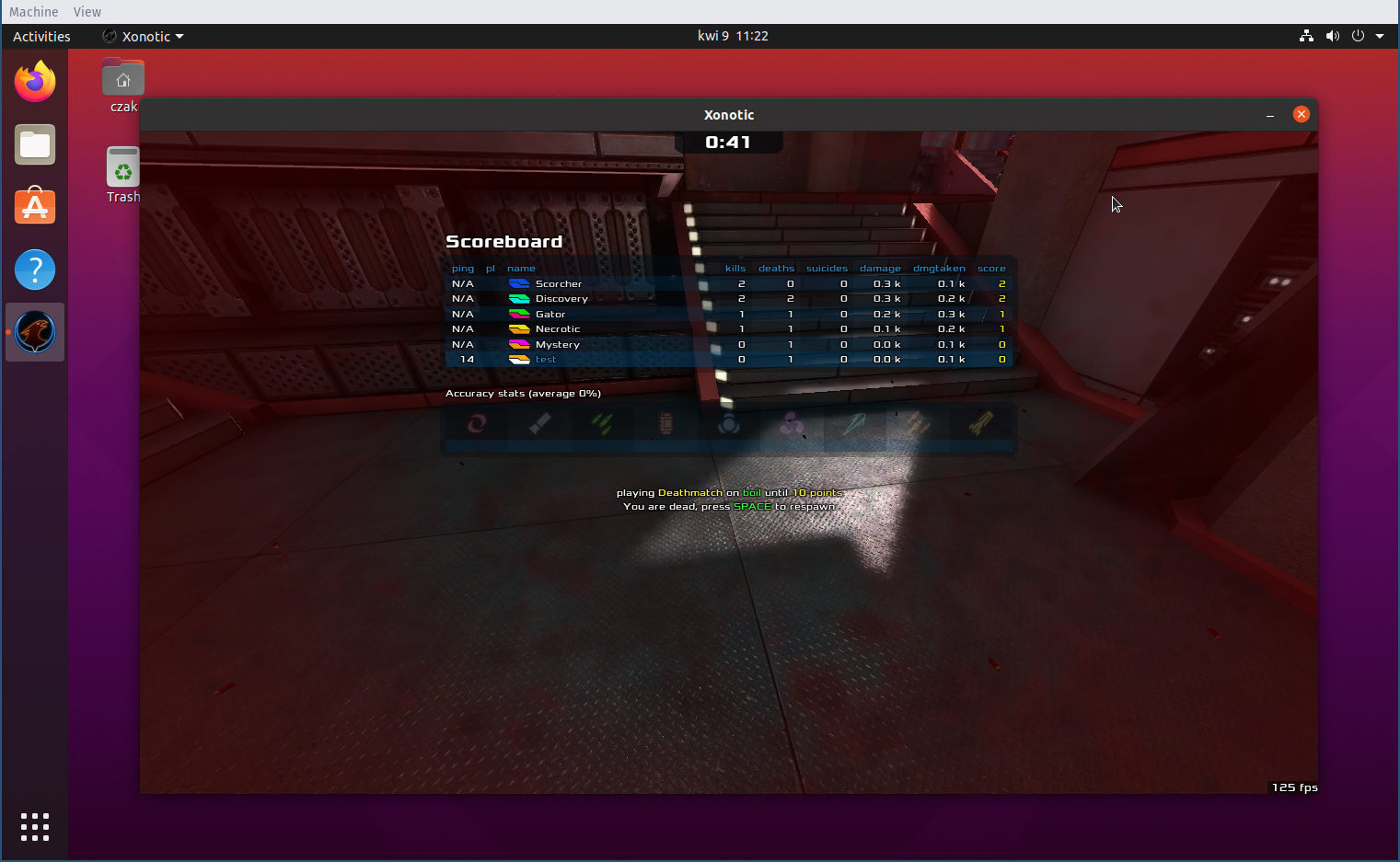

The difference is already noticeable in Ubuntu’s GNOME desktop. Beyond the desktop, the OpenGL support is perfectly capable of running 3D graphics.

Level 10: GPU passthrough

Some would have you believe that this is some VM holy grail. That you can only get it working with two GPUs and you’re better off dual booting, switching to Windows or picking up gardening instead. I won’t argue otherwise - I want my bragging rights after all. I have only one RX480 and no iGPU so this should be fun. Undeterred, I exit Xorg, unbind amdgpu and stare at the black screen…

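#!/bin/sh

# PCI addresses of the GPU and its HDMI audio function
GPU="0000:0d:00.0"
GPU_AUDIO="0000:0d:00.1"

# Detach both functions from their host drivers
echo "$GPU" > /sys/bus/pci/drivers/amdgpu/unbind
echo "$GPU_AUDIO" > /sys/bus/pci/drivers/snd_hda_intel/unbind

# Make vfio-pci the only driver allowed to claim them
echo vfio-pci > "/sys/bus/pci/devices/$GPU/driver_override"
echo vfio-pci > "/sys/bus/pci/devices/$GPU_AUDIO/driver_override"

# Loading vfio-pci (assuming it is not loaded yet) makes it probe
# and claim the overridden devices
modprobe vfio-pci

qemu-system-x86_64 \
    -enable-kvm \
    -smp 4 \
    -m 8G \
    -nographic \
    -vga none \
    -device vfio-pci,host=$GPU,x-vga=on \
    -device vfio-pci,host=$GPU_AUDIO \
    ubu.qcow2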

Yes, this is a different beast. The moment you unbind amdgpu, the host becomes headless. Until you get a reliable script, you will want to test this over SSH.
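The host also stays headless after QEMU exits, until the devices are handed back to their usual drivers. Here is a minimal sketch of that reverse step, assuming the driver_override approach from the script above. The function name release_from_vfio is my own, and the sysfs root argument is only there so the logic can be tried against a fake tree. It relies on two documented sysfs behaviours: writing an empty line to driver_override clears it, and writing a device address to drivers_probe asks the kernel to find a matching driver.

```shell
#!/bin/sh
# Give a PCI device back to whichever host driver normally claims it.
# $1: PCI address, e.g. 0000:0d:00.0
# $2: sysfs root (optional, defaults to /sys)
release_from_vfio() {
    dev="$1"
    sysfs_root="${2:-/sys}"
    pci="$sysfs_root/bus/pci"

    # Detach from vfio-pci if the device is currently bound to it
    if [ -e "$pci/drivers/vfio-pci/$dev" ]; then
        echo "$dev" > "$pci/drivers/vfio-pci/unbind"
    fi

    # An empty write clears the override set before starting QEMU
    echo > "$pci/devices/$dev/driver_override"

    # Ask the kernel to probe the device against the loaded drivers
    echo "$dev" > "$pci/drivers_probe"
}
```

On the real system this would run as root, once for $GPU and once for $GPU_AUDIO, right after the qemu-system-x86_64 command returns. Whether amdgpu comes back cleanly afterwards is a separate question that this sketch does not settle.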

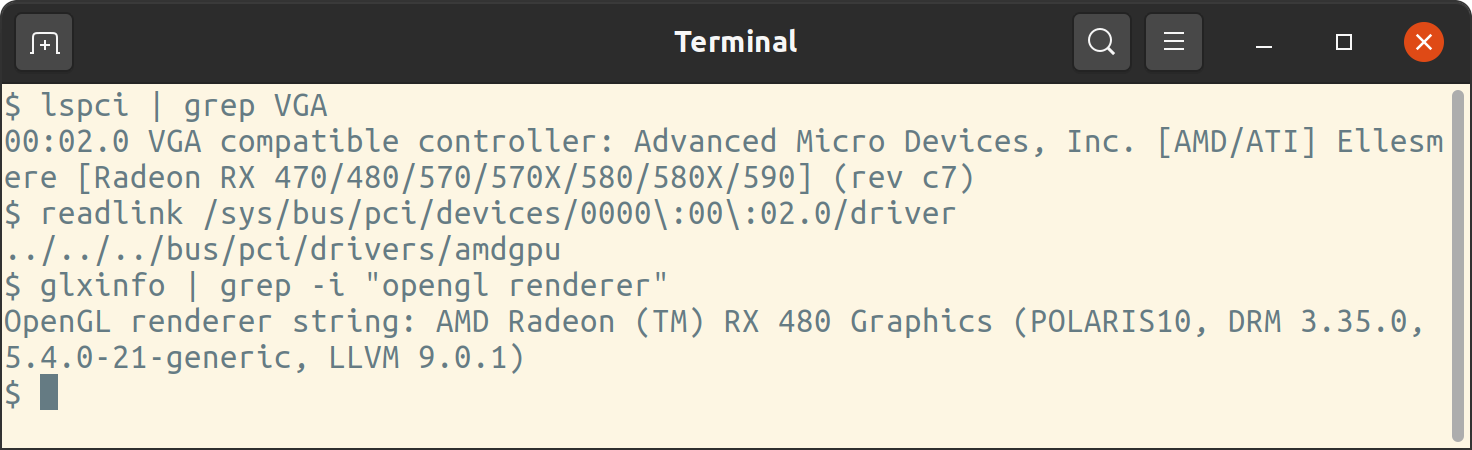

When the guest boots up, it has full access to the host GPU:

This deserves some more commentary.

- In my system, $GPU and $GPU_AUDIO (the HDMI audio controller) are the only members of IOMMU group 13.
- Both need to be unbound from their drivers before passing them to the VM via -device vfio-pci.
- x-vga=on seems to be required if you’re using SeaBIOS (as we are above). See below for an alternative.
- After qemu terminates, you will want the script to unbind vfio-pci and rebind the host device drivers.
- I struggled to get manual bind and unbind working, as described by Greg Kroah-Hartman. The approach with driver_override and modprobe has been more reliable for me.
- You might also want to pass through keyboard and mouse to the VM. I’ve omitted it here for clarity. To pass through my USB keyboard, I use -usb -device usb-host,vendorid=0x04d9,productid=0x0192.
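Since everything in an IOMMU group has to be handed over together, it is worth checking what shares a group with the GPU before unbinding anything. Here is a minimal sketch that reads the group membership from sysfs. The function name iommu_group_members is my own, and the sysfs root argument is only there for trying it against a fake tree.

```shell
#!/bin/sh
# List every PCI device in the same IOMMU group as the given device.
# $1: PCI address, e.g. 0000:0d:00.0
# $2: sysfs root (optional, defaults to /sys)
iommu_group_members() {
    dev="$1"
    sysfs_root="${2:-/sys}"
    group_dir="$sysfs_root/bus/pci/devices/$dev/iommu_group"

    # No iommu_group link usually means the IOMMU is disabled
    if [ ! -d "$group_dir/devices" ]; then
        echo "no IOMMU group found for $dev" >&2
        return 1
    fi

    for member in "$group_dir"/devices/*; do
        [ -e "$member" ] || continue
        basename "$member"
    done
}
```

On my machine I would expect iommu_group_members 0000:0d:00.0 to list just the GPU and its audio function. Anything else that shows up in the list also needs to be considered before passing the group to the VM.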

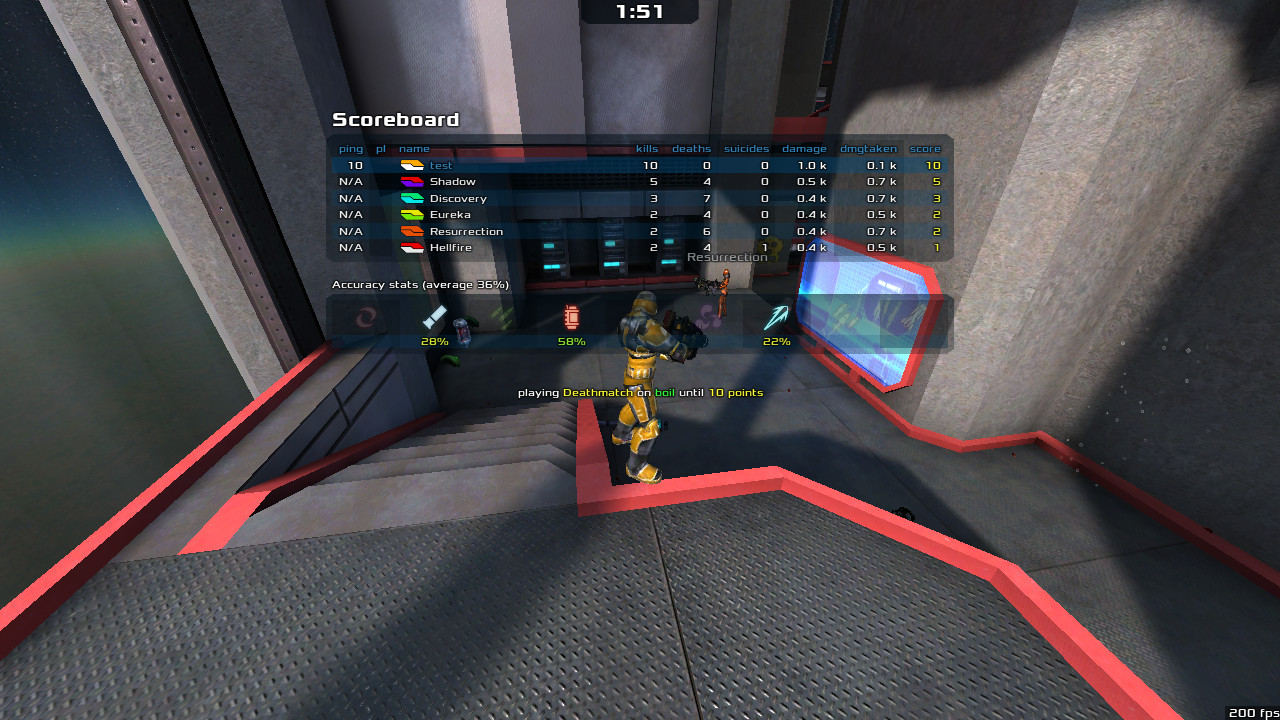

The result is virtually indistinguishable from bare metal. And I finally get the score right:

About OVMF

It’s not all roses, unfortunately. The above script works, but not consistently. I’m only able to run the passthrough VM once per reboot. After that, I keep getting “Unable to power on device, stuck in D3”. A more reliable setup uses the OVMF firmware. This way I can run the VM and Xorg multiple times a day without rebooting the host.

We need to obtain OVMF and run QEMU thus:

qemu-system-x86_64 \
    -enable-kvm \
    -smp 4 \
    -m 8G \
    -machine q35 \
    -drive if=pflash,format=raw,readonly,file=firmware/OVMF_CODE.fd \
    -drive if=pflash,format=raw,file=firmware/OVMF_VARS.fd \
    -nographic \
    -vga none \
    -device vfio-pci,host=$IOMMU_GPU \
    -device vfio-pci,host=$IOMMU_GPU_AUDIO \
    ubu-efi.qcow2
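The command above expects both firmware images in a local firmware directory. OVMF_VARS.fd is opened read-write because the guest stores its UEFI variables there, so it makes sense to give each VM its own copy. Here is a minimal sketch of that preparation step. The function name prepare_ovmf is my own, and the source directory is an assumption: I believe Ubuntu’s ovmf package installs the images under /usr/share/OVMF, but check where your distribution puts them.

```shell
#!/bin/sh
# Copy OVMF images into a per-VM firmware directory.
# $1: directory holding the distribution's OVMF_CODE.fd and OVMF_VARS.fd
# $2: destination directory (optional, defaults to ./firmware)
prepare_ovmf() {
    src="$1"
    dest="${2:-firmware}"

    mkdir -p "$dest" || return 1
    cp "$src/OVMF_CODE.fd" "$dest/OVMF_CODE.fd" || return 1

    # Keep an existing variable store so saved boot entries survive
    if [ ! -e "$dest/OVMF_VARS.fd" ]; then
        cp "$src/OVMF_VARS.fd" "$dest/OVMF_VARS.fd" || return 1
    fi
}
```

For example, prepare_ovmf /usr/share/OVMF (path assumed, as noted) would populate ./firmware on the first run and leave an already initialised OVMF_VARS.fd alone on later runs.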

Thoughts

I can’t even grasp the mountain of software which makes all this possible. I’m just glad it’s there. I stopped distro-hopping a long time ago and have only been VM-hopping since. The range of features of QEMU and the quality of Linux drivers make VMs feel like a superpower. And there’s plenty more still to explore, e.g. vfio-mdev. That will have to come next.